Modern computing has a vocabulary of its own, and some of its terms sound strikingly similar to the untrained ear.

Bit and byte are two such terms: they sound alike but mean different things. Both are units of digital information, used to measure computer memory, storage, and data transfer.

Key Takeaways

- Bits are the smallest units of digital data, whereas bytes consist of 8 bits.

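- A bit is short for ‘binary digit,’ while ‘byte’ is not an abbreviation: it is a deliberate respelling of ‘bite,’ chosen so that it would not be mistaken for ‘bit.’

- Bytes are the usual unit for file sizes and storage capacity, while bits are the usual unit for data transmission speeds.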

Bit vs Byte

Bit is a contraction of binary digit, while byte is not an abbreviation but a deliberate respelling of ‘bite.’ A bit is the smallest unit of data that a computer can represent, while a byte consists of 8 bits. A bit can represent only 2 values, while a byte can represent 256 different values.

Binary has only two digits, 0 and 1, so a bit holds exactly one of those two values.

In everyday programming, individual bits are rarely manipulated directly, although it can and does happen.

Computers work in digital form, turning information into bits: sequences of 0’s and 1’s that represent the information.

A byte is described as “a unit of memory or data equal to the amount of data required to represent one character; on contemporary architectures, this is always 8 bits.”

In other words, a byte holds enough information to encode a single character. Read as a number, it can take any value between 0 and 255.
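As a rough illustration of the link between a character, its byte value, and the eight bits behind it, here is a minimal Python sketch. The sample character and the variable names are assumptions chosen for the example, and ASCII is assumed as the encoding.

```python
# Illustrative only: the sample text and variable names are assumptions.
text = "A"
encoded = text.encode("ascii")          # one byte per ASCII character
byte_value = encoded[0]                 # an integer in the range 0..255
bit_string = format(byte_value, "08b")  # the same byte written as 8 bits

print(byte_value)  # 65
print(bit_string)  # 01000001
```

Other encodings, such as UTF-8, may use more than one byte for a single character, so the one-character-per-byte picture holds only for simple character sets.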

Comparison Table

| Parameters of Comparison | Bit | Byte |

|---|---|---|

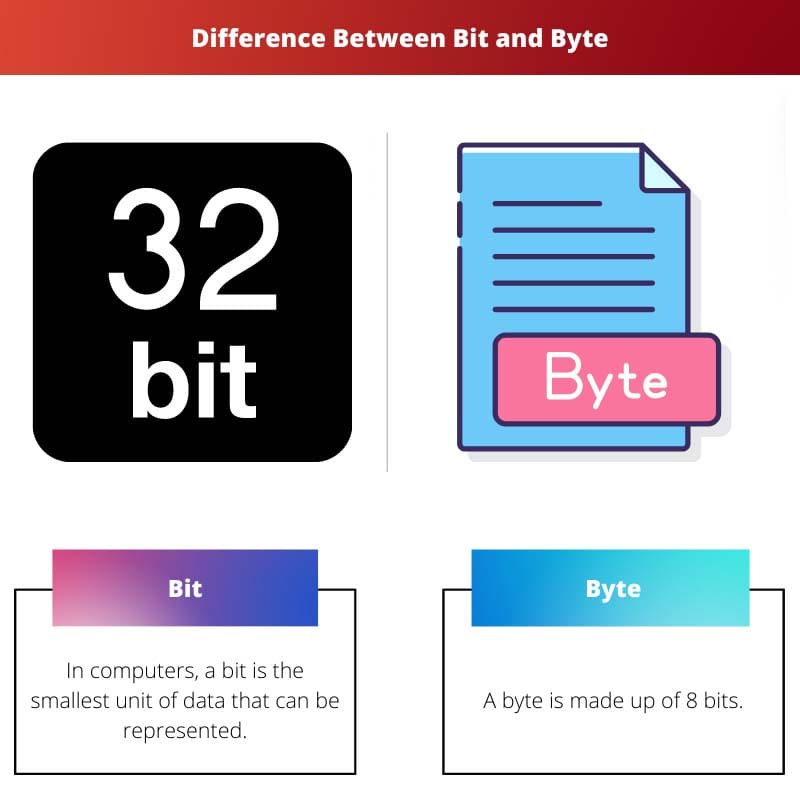

| Size of Unit | In computers, a bit is the smallest unit of data that can be represented. | A byte is made up of 8 bits. |

| Values | A maximum of two values may be expressed using a bit. | A byte may hold 256 distinct values. |

| Represented | Lowercase b. | Uppercase B. |

| Storage | Only 1’s and 0’s are stored in the computer’s memory. | The alphabet and additional special characters are all covered. |

| Different Sizes | A kilobit (kb), megabit (Mb), gigabit (Gb), terabit (Tb) | A kilobyte (KB), megabyte(MB), gigabyte (GB), terabyte (TB) |

What is Bit?

Computers are electrical devices that can only handle discrete data. Consequently, every kind of data that the computer wishes to work with gets turned into numbers in the end.

Computers, on the other hand, do not represent numbers in the same manner that we do. We utilize the decimal system, which uses ten digits to represent numbers (0, 1, 2, 3, 4, 5, 6, 7, 8, 9).

Modern computers use the binary system instead, which represents numbers with only two digits (0 and 1).
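To make the decimal-versus-binary contrast concrete, the sketch below converts a decimal number to its binary digits by repeated division by two. The function name `to_binary` is a hypothetical helper introduced for this example; Python's built-in `bin()` does the same job.

```python
def to_binary(n: int) -> str:
    """Return the binary digits of a non-negative integer as a string."""
    if n < 0:
        raise ValueError("n must be non-negative")
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # the remainder is the next bit, lowest first
        n //= 2
    return "".join(reversed(digits))  # highest bit goes first when written out


print(to_binary(13))  # 1101
```

Reading the result back, 1101 means 8 + 4 + 0 + 1, which is 13 in decimal.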

By the most basic definition, a bit is the smallest unit of information: a single binary digit.

Physically, a bit corresponds to one of two states of a circuit element: a zero (no charge) or a one (a completed, charged circuit).

One byte of information is made up of eight bits of information.

Bits (and their progressively bigger cousins, such as kilobits, megabits, and gigabits) are also used to quantify data transmission speeds. This usage is more common today than it was in earlier generations of computing. “Mbps” is one of the most misunderstood abbreviations in all of contemporary computing, since it refers to megabits per second, not megabytes per second, as many readers assume.
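The practical effect of the megabit/megabyte mix-up can be sketched with a little arithmetic. The function names below are hypothetical, and the sketch assumes that "mega" means the same multiplier for bits and bytes and that protocol overhead is ignored, so real downloads will be somewhat slower.

```python
BITS_PER_BYTE = 8


def mbps_to_megabytes_per_second(mbps: float) -> float:
    """Convert a link speed in megabits per second to megabytes per second."""
    return mbps / BITS_PER_BYTE


def download_seconds(file_megabytes: float, link_mbps: float) -> float:
    """Idealized time to transfer a file, ignoring overhead (an assumption)."""
    return file_megabytes / mbps_to_megabytes_per_second(link_mbps)


print(mbps_to_megabytes_per_second(100))  # 12.5
print(download_seconds(500, 100))         # 40.0
```

In other words, a connection advertised at 100 Mbps moves at most about 12.5 megabytes each second, not 100.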

Bitwise Operations

In computer programming, bitwise operations are fundamental actions manipulating individual bits in a bit string, bit array, or binary numeral. The processor directly supports these operations, making them fast and simple compared to higher-level arithmetic operations.

When working with bits and bytes, you will encounter five primary bitwise operations: AND, OR, XOR, NOT, and shift operations. Understanding these operations can greatly enhance your programming and data manipulation skills.

- Bitwise AND (&): This operation compares each bit of the first operand with the corresponding bit of the second operand. If both bits are 1, the corresponding result bit is set to 1; otherwise, the result bit is set to 0.

- Bitwise OR (|): The OR operation compares each bit of the first operand with the corresponding bit of the second operand. If either of the bits is 1, the corresponding result bit is set to 1; otherwise, the result bit is set to 0.

- Bitwise XOR (^): The XOR operation is similar to the OR operation, but with a slight difference. In this case, the result bit is set to 1 if only one of the corresponding bits is 1, but not both.

- Bitwise NOT (~): The NOT operation is a unary operation, meaning it requires only one operand. It inverts the bits of its operand, changing 1s to 0s and 0s to 1s.

- Shift operations: There are two types of shift operations – left shift (<<) and right shift (>>). The left shift operation moves bits to the left by a specified number of positions, while the right shift operation moves bits to the right by a specified number of positions.
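The five operations listed above can be tried out directly in Python, which uses the same operator symbols. This is a small sketch with arbitrary 4-bit sample values; note that Python integers are not fixed-width, so the NOT result is masked with `0xF` to keep only the lowest four bits.

```python
a = 0b1100  # 12
b = 0b1010  # 10

and_result = a & b        # 1 only where both bits are 1
or_result = a | b         # 1 where either bit is 1
xor_result = a ^ b        # 1 where exactly one bit is 1
not_result = ~a & 0xF     # invert, then keep the lowest 4 bits
left_shifted = a << 1     # move bits one position left (doubles the value)
right_shifted = a >> 2    # move bits two positions right

print(format(and_result, "04b"))     # 1000
print(format(or_result, "04b"))      # 1110
print(format(xor_result, "04b"))     # 0110
print(format(not_result, "04b"))     # 0011
print(format(left_shifted, "05b"))   # 11000
print(format(right_shifted, "04b"))  # 0011
```

Without the mask, `~a` in Python evaluates to -13, because the language treats integers as having an unlimited number of sign bits.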

What is Byte?

A byte is an eight-bit representation of information, and it is the most frequently used word for referring to the quantity of information that may be kept in a computer’s memory.

Here, “eight bits” does not mean just any eight bits but a group of eight bits that the computer handles as a single, cohesive unit.

The term byte was coined in 1956, during the design of the IBM Stretch computer.

One byte may represent 2^8 = 256 different values.

When unit names are abbreviated, the “B” for byte is capitalized to distinguish it from the lowercase “b” for its smaller relative, the bit; for example, “Gb” is short for “gigabit,” while “GB” is short for “gigabyte.”

Bytes’ Different Multiples

Kilobyte

A Kilobyte (KB) is a multiple of the byte. In the binary convention used here, one kilobyte comprises 1,024 bytes. It is commonly used to measure the size of small files, documents, and images. For example, a typical text file might be a few kilobytes. Under the SI decimal convention, a kilobyte is exactly 1,000 bytes, and the IEC name for 1,024 bytes is the kibibyte (KiB). Both conventions are in use, so it is worth checking which one a given tool or vendor means.

Megabyte

A Megabyte (MB) is another multiple of bytes, equal to 1,024 kilobytes, or 1,048,576 bytes. Megabytes are commonly used to measure the size of larger files, such as images, music files, and software applications. For instance, an average song file in the MP3 format may require a few megabytes of storage space.

Gigabyte

A Gigabyte (GB) is a unit larger than megabytes, consisting of 1,024 megabytes, or 1,073,741,824 bytes. Gigabytes measure even larger data sizes, such as video files, large software applications, and hard drive capacities. For example, a common smartphone may have around 64 or 128 gigabytes of storage capacity.

Terabyte

A Terabyte (TB) is larger still. One terabyte comprises 1,024 gigabytes, or 1,099,511,627,776 bytes. Terabytes measure large data sizes, including hard drive capacities, data center storage, and enterprise-level data backups. For instance, modern external hard drives commonly offer 1 to 8 terabytes of storage.
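The ladder of multiples above can be walked programmatically. The following sketch turns a raw byte count into a readable size, assuming the 1,024-based convention used in this section; the helper name `human_readable` and the two-decimal formatting are choices made for the example.

```python
UNITS = ["bytes", "KB", "MB", "GB", "TB"]


def human_readable(num_bytes: int) -> str:
    """Format a byte count using 1,024-based multiples (an assumed convention)."""
    size = float(num_bytes)
    for unit in UNITS:
        # Stop once the value fits in this unit, or when no larger unit is left.
        if size < 1024 or unit == UNITS[-1]:
            return f"{size:.2f} {unit}"
        size /= 1024


print(human_readable(1536))               # 1.50 KB
print(human_readable(1_048_576))          # 1.00 MB
print(human_readable(1_099_511_627_776))  # 1.00 TB
```

Swapping 1024 for 1000 in the sketch would give the decimal convention that storage vendors typically use on packaging.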

Main Differences Between Bit and Byte

- When it comes to computers, a bit is the smallest unit of data that can be represented, while a byte is eight bits.

- A bit can represent at most two values, whereas a byte can represent up to 256 different values.

- A bit is abbreviated with a lowercase b, whereas a byte is abbreviated with an uppercase B.

- A single bit stores just a 1 or a 0, while a byte can encode a letter of the alphabet, a digit, or a special character.

- Bits come in multiples such as the kilobit (kb), megabit (Mb), gigabit (Gb), and terabit (Tb), whereas bytes come in multiples such as the kilobyte (KB), megabyte (MB), gigabyte (GB), and terabyte (TB).

Conversion of Bits to Bytes

In the world of digital data, bits and bytes are two important units of measurement. A bit represents the smallest unit of digital data, with a value of either 0 or 1. A byte is slightly larger and consists of 8 bits. You might need to convert between these two units when working with data. In this section, we will guide you through converting bits to bytes.

To convert bits to bytes, divide the number of bits by 8. For example, if you have 16 bits, dividing by 8 gives you 2 bytes. Here’s the simple formula for converting bits to bytes:

Bytes = Bits / 8

Let’s go over a few examples so you can see how this conversion works in practice:

- If you have 32 bits, divide 32 by 8, which gives you 4 bytes.

- If you have 64 bits, divide 64 by 8, which gives you 8 bytes.

- If you have 128 bits, divide 128 by 8, which gives you 16 bytes.

When the number of bits is not a multiple of 8, the division produces a fractional result, and how you handle it depends on context. For instance, in situations where only whole bytes are allowed (such as file sizes or memory capacities), you need to round up to the nearest whole number: 12 bits occupy 2 bytes, not 1.5.

Conversely, if you need to convert bytes back to bits, multiply the number of bytes by 8. Here’s the formula for converting bytes to bits:

Bits = Bytes × 8
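Both formulas are easy to express in code. The sketch below uses hypothetical helper names and assumes that whole bytes are required, so the bits-to-bytes direction rounds up.

```python
BITS_PER_BYTE = 8


def bits_to_bytes(bits: int) -> int:
    """Whole bytes needed to hold the given number of bits, rounding up."""
    return (bits + BITS_PER_BYTE - 1) // BITS_PER_BYTE


def bytes_to_bits(num_bytes: int) -> int:
    """Number of bits contained in the given number of bytes."""
    return num_bytes * BITS_PER_BYTE


print(bits_to_bytes(16))  # 2
print(bits_to_bytes(12))  # 2
print(bytes_to_bits(4))   # 32
```

If fractional bytes are acceptable in your context, plain division (`bits / 8`) is enough and no rounding is needed.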

Remember that conversion between bits and bytes is a straightforward process and can easily be performed using these simple formulas. With a clear understanding of the conversion process, you’ll be better equipped to work with digital data in various contexts, whether storage capacities, file sizes, or data transmission speeds.

Common Misconceptions About Bits and Bytes

One common mistake is to treat bits and bytes as interchangeable. In reality, a bit is a computer’s smallest unit of information, representing a single binary value of either 0 or 1. A byte, on the other hand, is a unit of digital information consisting of 8 bits. This makes a byte larger than a bit and capable of storing more complex information.

Another misconception is that data storage and data transmission speed are measured in the same units. By convention, data storage is measured in bytes (kilobytes, megabytes, gigabytes), while data transmission speeds, such as internet bandwidth, are measured in bits (kilobits, megabits, gigabits). This distinction is important for understanding a system’s actual size or speed.

Additionally, people often mix up the abbreviations for bits and bytes. The uppercase “B” represents bytes (e.g., MB for megabytes), while the lowercase “b” represents bits (e.g., Mb for megabits). This slight difference in notation changes the value by a factor of eight, which matters when comparing storage sizes or internet speeds.

To further clarify, here’s a quick summary:

- 1 bit = 0 or 1 (smallest unit of information)

- 1 byte = 8 bits (larger unit, used for data storage)

- Data storage: measured in bytes (e.g., GB for gigabytes)

- Data transmission speeds: measured in bits (e.g., Gb for gigabits)

- Abbreviations: uppercase “B” for bytes, lowercase “b” for bits

